The end of AI is nuclear power.

At the US-Saudi Arabia Investment Forum on November 20, Musk laid bare the "Emperor's New Clothes" of the AI industry in a single remark: "The bottleneck of AI is not money and algorithms at all - there is no shortage of investors pouring money into it, what is lacking is the electricity to power AI and the data centers to install computing power."

This is no exaggeration. Late at night in Washington state's data centers, H100 GPUs training GPT-6 flash brighter than disco lights; in Texas's AI industrial park, the electricity Sora consumes to produce one second of video is enough for 17,000 American households for a day.

The tech giants have already voted with their actions: Microsoft signed a 20-year nuclear power agreement, and Google directly bought nuclear reactors. It's clear to everyone: for AI to run continuously, it needs nuclear power as a "perpetual motion machine power bank."

AI isn't an "energy hog," it's a "24-hour energy-consuming monster."

Who would have thought that AI, which started with code, would now become a "nightmare" for the power system?

Its hardware is extremely power-hungry: a regular server rack draws up to 14 kilowatts, while an AI rack draws 40-60 kilowatts, equivalent to cramming the electricity of an entire building into a metal cabinet.

Training large models is even more extravagant: a single GPT-5 training run consumes 100,000 megawatt-hours, enough to supply a medium-sized city for a week with every streetlight on and the air conditioning running at full blast.

Daily operations are also fuel-intensive: ChatGPT consumes over 500,000 kilowatt-hours of electricity per day, which is 17,000 times the average daily electricity consumption of an American household.
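The household comparison can be checked with one line of arithmetic. The sketch below uses only the two figures quoted above; the function name is illustrative, and the result is the per-household daily usage those figures imply, not an independently sourced statistic.

```python
# Back-of-the-envelope check of the household comparison quoted above.
CHATGPT_KWH_PER_DAY = 500_000   # figure quoted in the article
HOUSEHOLD_MULTIPLE = 17_000     # figure quoted in the article


def implied_household_kwh_per_day(total_kwh: float, households: float) -> float:
    """Daily kWh per household implied by the two quoted figures."""
    return total_kwh / households


per_household = implied_household_kwh_per_day(CHATGPT_KWH_PER_DAY, HOUSEHOLD_MULTIPLE)
print(f"Implied household usage: {per_household:.1f} kWh per day")
```

The two figures imply a baseline of roughly 29 kWh per household per day.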

Inference is even worse: training takes a few months, but inference runs for years, and over the long run it consumes more power than training, making it a "buy one, get one free" power-guzzling package.

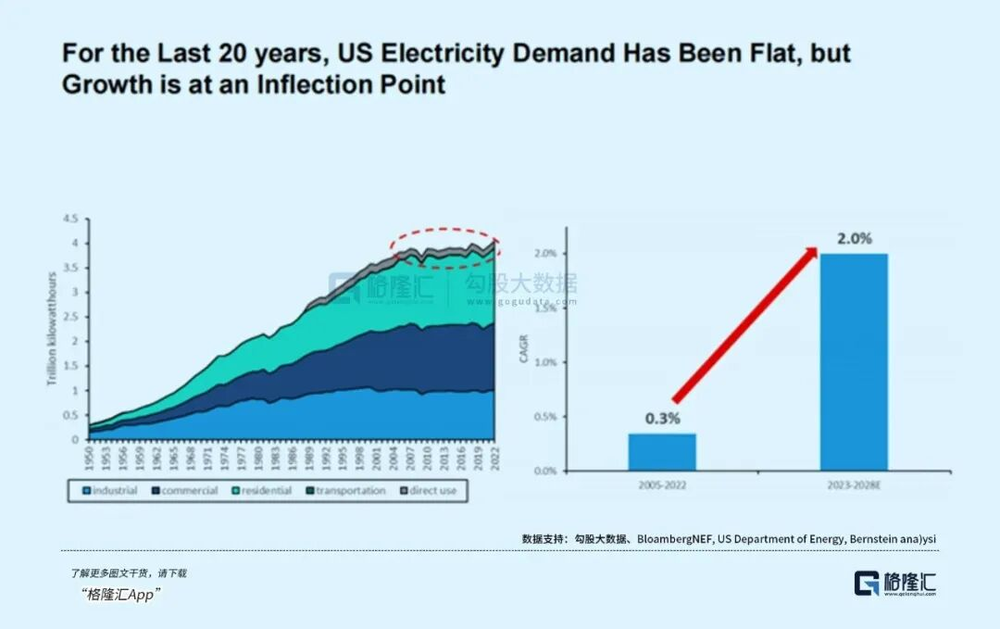

Data centers in the United States currently account for 2.5% of total electricity consumption, and this figure is projected to reach 7.5% by 2027 and possibly 15% by 2028.

Morgan Stanley estimates that global data center power consumption will be 430-748 terawatt-hours from 2023 to 2027, accounting for 2%-4% of global electricity consumption, while the power demand for generative AI will increase by 105% annually.
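To see what a 105% annual increase means in practice, the growth can be compounded year by year. This is a minimal sketch that assumes the rate holds constant and is measured against a 2023 baseline; both assumptions are for illustration and are not stated in the estimate itself.

```python
# Compound a 105% annual increase, i.e. demand multiplies by 2.05 each year.
ANNUAL_GROWTH = 1.05  # 105% increase per year, as quoted in the article


def growth_multiple(annual_growth: float, years: int) -> float:
    """Total multiple after compounding the annual growth rate for `years` years."""
    return (1.0 + annual_growth) ** years


# Years 1 to 4 correspond to 2024 through 2027 under the assumed 2023 baseline.
for years in range(1, 5):
    print(f"after {years} year(s): {growth_multiple(ANNUAL_GROWTH, years):.2f}x")
```

Under these assumptions, demand in the fourth year is roughly 17.7 times the baseline, which is why even a small starting share can become a grid-scale load within a few years.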

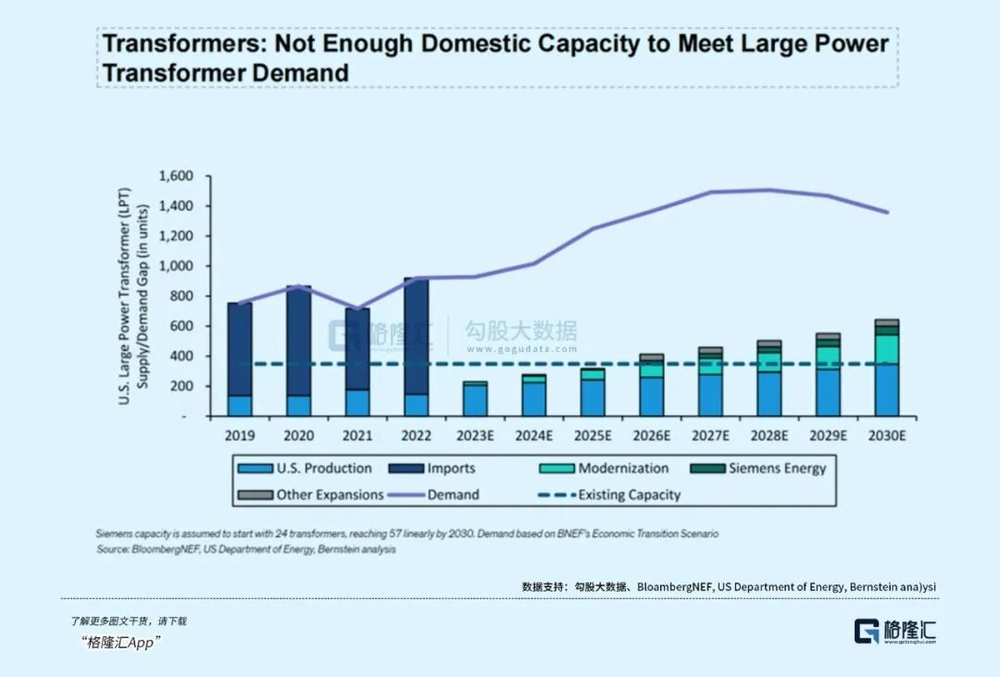

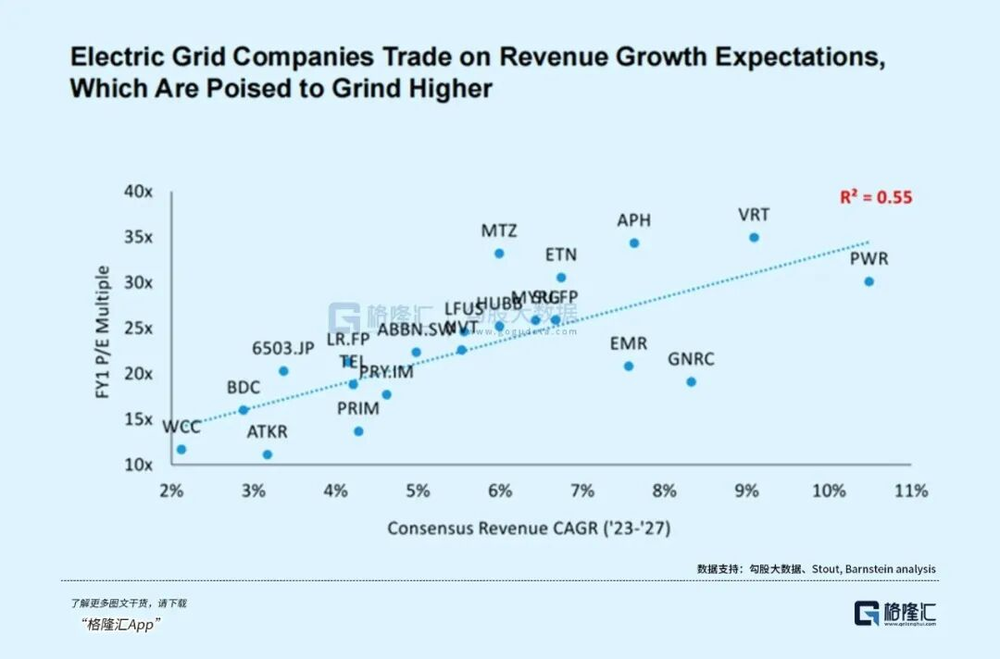

The most disheartening aspect is the disconnect in infrastructure development: building a data center takes 2 years, a power plant takes 3-5 years, while a long-distance, high-capacity transmission line takes a staggering 8-10 years. AI iterates annually, while power grids are built in ten-year increments, making it impossible to keep up.

Wind and solar renewable energy: Looks promising, but actually "unreliable".

Some people say, "Wind and solar power are green and environmentally friendly," but when they actually use them, they find that they are "good-looking but impractical."

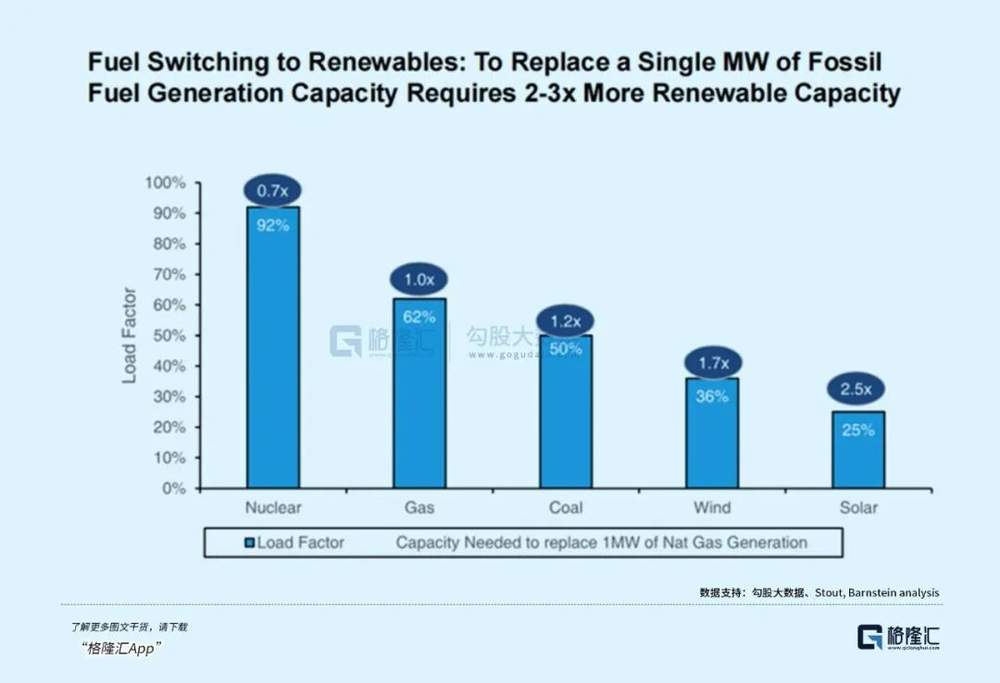

Wind turbines generate only when the wind blows, for an annual utilization rate of only 36%; solar panels generate only when the sun shines, for an annual utilization rate of only 25%.

But AI needs to be online 24/7: the robotic arms in smart factories can't stop, or the production line will shut down; remote medical data can't be interrupted, or treatment will be delayed; if Sora loses power mid-job, all the computation done so far is wasted, and anyone would be furious.

To ensure stable wind and solar power, energy storage equipment is essential, which directly increases the overall cost.

California's NEM3.0 policy, introduced in 2022, cut the price of feeding surplus electricity from residential solar PV back to the grid by 75%, extending the payback period for residential solar power from 5-6 years to 9-10 years.

For data centers, the cost of energy storage is astronomical—the investment to store enough power to support the continuous operation of tens of thousands of GPUs may be higher than the data center itself.

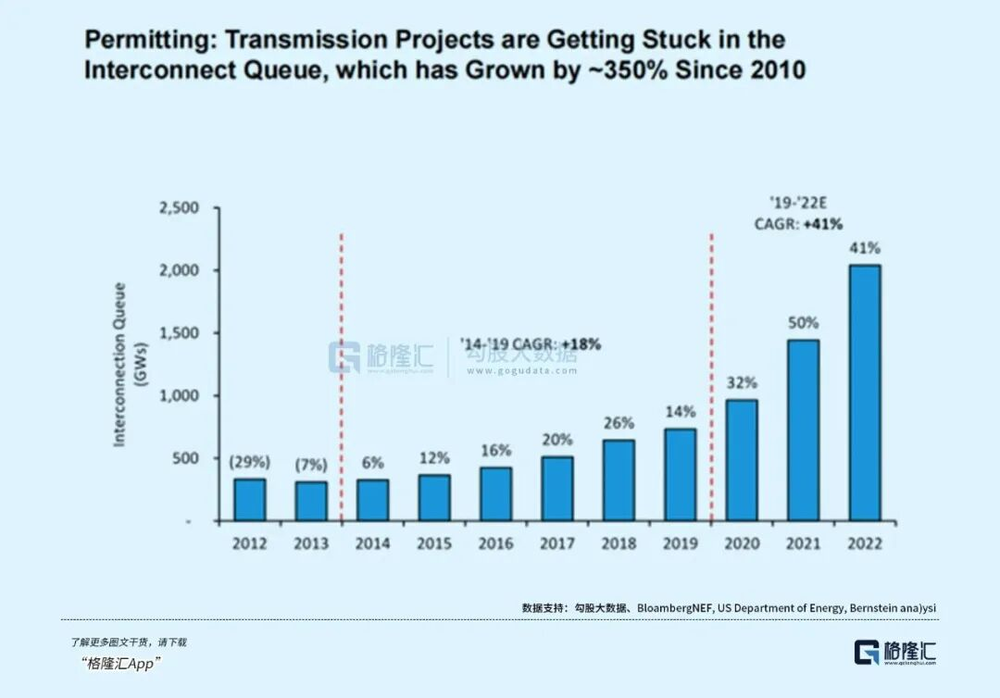

More importantly, it is extremely difficult to connect new energy sources to the power grid. The backlog of projects waiting for a grid connection in the United States has grown by 350% since 2010. Many new energy power plants have been built, but they cannot supply power because they are still waiting to be connected.

The supply of new energy cannot keep pace with AI's growth. Total power generation in the United States has held roughly steady at 4,100 terawatt-hours over the past 10 years, and Europe's stands at only 3,120 terawatt-hours. Yet new demand from AI data centers alone is expected to exceed 100 terawatt-hours (100 billion kilowatt-hours) in the next few years.

Although the installed capacity of wind power and photovoltaic power is increasing, it is difficult to achieve explosive growth in the short term due to factors such as land and environment.

Even more awkwardly, the existing power systems in Europe and the United States are aging. Nuclear power generation in the United States has been declining continuously since 2020, coal power is gradually being retired, and new energy sources are not keeping up. The result is a supply vacuum in which "the old is being retired and the new is not enough," one that simply cannot support AI's computing boom.

Nuclear power's comeback: From a "washed-up internet sensation" to a "hot commodity" for tech giants.

Just when things seemed to be in a dilemma, nuclear power suddenly became popular again—previously, people were afraid of safety issues when it came to nuclear power, but now it has become a major asset that Microsoft and Google are vying to partner with.

Nuclear power is the "king of energy": with an annual utilization rate of 92%, it works harder than programmers working 996, and it doesn't stop even when it's windy or rainy.
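The annual utilization rate quoted here is what power engineers usually call a capacity factor: average output divided by nameplate capacity. The sketch below shows what the three rates quoted in this article imply for a constant load. The 100 MW data-center load is a hypothetical figure chosen for illustration, and the calculation ignores storage, which wind and solar would still need to cover calm or dark hours.

```python
# Nameplate capacity needed so that *average* output matches a constant load.
CAPACITY_FACTORS = {   # annual utilization rates quoted in the article
    "wind": 0.36,
    "solar": 0.25,
    "nuclear": 0.92,
}
LOAD_MW = 100.0        # hypothetical data-center load, for illustration only


def nameplate_needed_mw(load_mw: float, capacity_factor: float) -> float:
    """Installed capacity whose average output equals load_mw."""
    if not 0.0 < capacity_factor <= 1.0:
        raise ValueError("capacity factor must be in (0, 1]")
    return load_mw / capacity_factor


for source, cf in CAPACITY_FACTORS.items():
    needed = nameplate_needed_mw(LOAD_MW, cf)
    print(f"{source:>7}: {needed:.0f} MW of nameplate capacity")
```

On these figures, matching a 100 MW average load takes about 278 MW of wind or 400 MW of solar, against about 109 MW of nuclear.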

AI is a perfect match for this profile: training requires concentrated bursts of computing power, inference requires long-term continuous operation, and nuclear power can supply both.

Better still, nuclear power plants can be built next to data centers without relying on long power transmission lines. In effect, "a power bank can be plugged directly into a mobile phone."

Nuclear power is also quite green: it emits almost no carbon dioxide during operation, and the energy from the fission of 1 kilogram of uranium-235 is equivalent to 2,700 tons of standard coal.
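The uranium-to-coal comparison can be reproduced from textbook constants. The sketch below assumes about 200 MeV released per fission and a standard coal equivalent of 7,000 kcal/kg (about 29.3 MJ/kg). Both are conventional round values rather than figures from this article, and complete fission of the full kilogram is assumed.

```python
# Energy from complete fission of 1 kg of U-235, expressed in tons of standard coal.
AVOGADRO = 6.022e23              # atoms per mole
U235_MOLAR_MASS_G = 235.0        # grams per mole (rounded)
MEV_PER_FISSION = 200.0          # assumed conventional value, approximate
JOULES_PER_MEV = 1.602e-13
STANDARD_COAL_J_PER_KG = 29.3e6  # assumed: 7,000 kcal/kg standard coal equivalent


def coal_equivalent_tons(uranium_kg: float) -> float:
    """Metric tons of standard coal matching the fission energy of uranium_kg of U-235."""
    atoms = uranium_kg * 1000.0 / U235_MOLAR_MASS_G * AVOGADRO
    energy_j = atoms * MEV_PER_FISSION * JOULES_PER_MEV
    return energy_j / STANDARD_COAL_J_PER_KG / 1000.0


print(f"1 kg U-235 is roughly {coal_equivalent_tons(1.0):,.0f} t of standard coal")
```

With these inputs the result comes out near 2,800 tons, in line with the 2,700 tons quoted above; the small gap comes from the rounded constants.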

A one-million-kilowatt nuclear power plant uses tens of tons of nuclear fuel annually, enough to power a large data center for a year.

The giants have already voted with real money:

- In September 2024, Microsoft signed a 20-year agreement with Constellation Energy to supply its AI data centers exclusively;

- In October, Google ordered 6-7 small nuclear reactors from Kairos Power, with a total capacity of 500 megawatts;

- Following that, Amazon invested $500 million in nuclear power company X-energy, with plans to build 5 gigawatts of nuclear power by 2039.

The logic is simple: in the end, the AI competition comes down to power stability, and nuclear power is the only safety net.

Nuclear power + AI: A perfect combination for mutual benefit

Don't think that AI is unilaterally taking advantage of the situation; these two are a "couple" that complements each other.

AI makes nuclear power smarter:

- Real-time monitoring of reactor data allows for fault detection up to 30 days in advance, reducing downtime by 30%;

- Algorithms optimize fuel ratios and improve nuclear fuel utilization;

- Build digital twin models to simulate extreme scenarios and mitigate risks.

After the GEV nuclear reactor was equipped with AI, its efficiency improved and its operation and maintenance costs fell by 12%, much like an old factory replacing its production line with an automated one.

Nuclear power can liberate AI:

Previously, AI data centers could only be crammed into big cities, where grid power was available. Now small modular nuclear reactors (SMRs) can be built directly next to a data center, so the data center can go wherever it is needed.

Google has ordered SMRs, which will be placed in its AI industrial park to completely eliminate its dependence on the power grid.

Nuclear power can bring AI to rural areas:

With stable nuclear power in Africa, AI educational tablets and diagnostic equipment can be introduced into rural areas; remote mining areas rely on nuclear power for energy, and AI monitoring operates 24 hours a day.

Carbon neutrality adds further momentum: coal power is being phased out, nuclear power is a clean baseload source, and AI demand has opened up new markets for it.

It is predicted that by 2030, global AI computing power will be 500 times that of 2020, and nuclear power demand will increase by 3 to 5 times.

With the US Inflation Reduction Act providing subsidies for nuclear power and SMR technology maturing, nuclear power is entering a "golden age."

AI surges forward, nuclear power provides a safety net.

Musk has revealed the truth: AI is lacking a reliable power supply.

When AI computing power breaks through the sky, wind and solar power can't keep up, and the power grid can't keep up, nuclear power becomes the only solution.

"The end of AI is nuclear power" is not just a slogan, but an industrial logic: AI needs stable electricity, and nuclear power provides it; nuclear power needs to improve efficiency, and AI helps.

The giants' move is a long-term bet: whoever locks in nuclear power first will gain an advantage in the AI race.

In the future we will see:

- AI data centers becoming neighbors with nuclear power plants;

- SMRs acting as "power banks" for edge computing;

- African children using AI tablets to learn, and remote mining areas relying on AI to ensure safety.

Those who say "electricity will peak" haven't seen how fast AI consumes electricity; those who say "new energy can replace everything" haven't experienced the pitfalls of power outages and reduced computing power.

AI has no ceiling, and the demand for electricity has no end; nuclear power is the "perpetual motion machine power bank" that can keep feeding it.

When AI code meets nuclear power, humanity will truly have the foundation for the next stage of its voyage to the stars.